Having seen Vladan's Openfiler results yesterday, I told him that I would re-run my tests using the same Iometer script that he used, just to make sure that the results he saw weren't down to his hardware.

Unfortunately, his results held up (or rather... they were equally bad).

Following the testing routine he took from Maish's website, and using the script file from here, I ran only the following tests:

- 4K; 100% Read; 0% random (Regular NTFS Workload 1)
- 4K; 75% Read; 0% random (Regular NTFS Workload 2)
- 4K; 50% Read; 0% random (Regular NTFS Workload 3)
- 4K; 25% Read; 0% random (Regular NTFS Workload 4)
- 4K; 0% Read; 0% random (Regular NTFS Workload 5)

It should be noted that each of these tests takes 1 hour to run, and each test was carried out twice.
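To make the test matrix explicit, here is a small Python sketch that models the five access specifications and works out the total run time. It is an illustrative data structure of my own, not Iometer's script file format, and it assumes the one-hour figure applies to each workload and that the runs happen back to back.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessSpec:
    """One Iometer-style access specification (illustrative model, not Iometer's file format)."""
    name: str
    block_size_kb: int
    read_pct: int
    random_pct: int

    @property
    def write_pct(self) -> int:
        # Whatever is not a read is a write.
        return 100 - self.read_pct


# The five workloads: 4K blocks, 0% random, read share stepping down by 25% each time.
SPECS = [
    AccessSpec(f"Regular NTFS Workload {i + 1}", 4, read, 0)
    for i, read in enumerate([100, 75, 50, 25, 0])
]

RUN_HOURS_PER_TEST = 1  # assumption: each workload runs for one hour
REPEATS = 2             # each test was carried out twice


def total_hours(specs, hours_per_test=RUN_HOURS_PER_TEST, repeats=REPEATS):
    """Wall-clock time for the full set, assuming the tests run back to back."""
    return len(specs) * hours_per_test * repeats


if __name__ == "__main__":
    for s in SPECS:
        print(f"{s.name}: {s.block_size_kb}K, {s.read_pct}% read / "
              f"{s.write_pct}% write, {s.random_pct}% random")
    print(f"Total: {total_hours(SPECS)} hours")
```

In other words, one full pass of both runs ties up the box for the best part of a working day.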

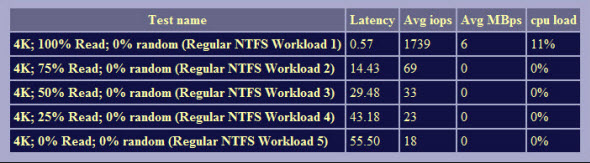

Openfiler RAID 5 NFS Results

As you can see, they aren’t a million miles away from the results that Vladan gets.

All in all, some strange results.

Having spent the day running the tests on the DSS v6 installation, I can now present the results. Please note that these results are based on the unrevised test used above; new testing following Didier's suggestion (32 outstanding IOs instead of 1) is now in progress.

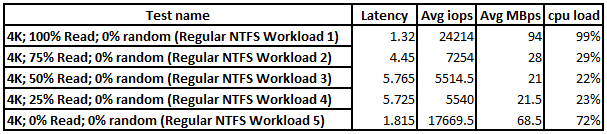

**Update #1: DSS RAID 5 NFS Results**

Here you can see a massive improvement over Openfiler. Further results to follow.

**Update #2: DSS RAID 5 NFS Results with 32 outstanding IOs**

The above results reflect a change to the testing carried out previously: instead of testing with a single outstanding IO, I have tested with 32 outstanding IOs. The change follows Didier's recommendation that, when testing from a single VM on a single host, the number of outstanding IOs should be increased. The tests were run twice and the results averaged.
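For anyone wondering why going from 1 to 32 outstanding IOs makes such a difference, Little's Law gives a rough upper bound: IOPS is approximately the number of outstanding IOs divided by the average latency. The sketch below is illustrative only; the latency figures are hypothetical, not measured on either box, and in practice latency rises as the queue gets deeper.

```python
def max_iops(outstanding_ios: int, latency_ms: float) -> float:
    """Little's Law: outstanding IOs = IOPS x latency, so IOPS = outstanding IOs / latency."""
    if outstanding_ios < 1 or latency_ms <= 0:
        raise ValueError("need at least one outstanding IO and a positive latency")
    return outstanding_ios / (latency_ms / 1000.0)


def mb_per_s(iops: float, block_size_kb: int = 4) -> float:
    """Convert IOPS at a fixed block size (4K, as in the tests) to MB/s, with 1 MB = 1024 KB."""
    return iops * block_size_kb / 1024.0


if __name__ == "__main__":
    # Hypothetical latencies: 0.5 ms with one IO in flight, 4 ms with 32 in flight.
    for qd, lat in [(1, 0.5), (32, 4.0)]:
        iops = max_iops(qd, lat)
        print(f"QD {qd:>2} @ {lat} ms -> {iops:.0f} IOPS, {mb_per_s(iops):.1f} MB/s")
```

With a single outstanding IO the host waits for each IO to complete before issuing the next, so IOPS is capped at 1/latency no matter how many spindles sit behind the RAID 5 set.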

What we can see from this is that, where NFS is concerned, DSS v6 is by far the faster product in these tests. My opinion and advice to those of you who need NFS storage from a home-brew NAS/SAN device is to give DSS a serious look.

Comments

4 responses to “Additional testing for Vladan – Update #2”

Simon,

At least I’m not the only one having a weird feeling about the performance of Openfiler.

Hi Simon, Vladan,

By default the tests run with 1 single outstanding I/O.

If you are testing from one single VM on one single host accessing one single VMFS datastore, then you can increase that number to 32, and eventually 64, to see if there is any improvement.

BTW, IOmeter allows the specifications to be cycled with incrementing outstanding I/Os, either exponentially or linearly. See Test Setup, Cycling Options.

With ESXi 4.1, the queue depth varies between 32 and 64 depending on the type of protocol, such as iSCSI or FC, and on the HBA vendor as well.

Note that for NFS shares, it is better to use IOzone than IOmeter.

Cheers,

Didier
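Didier's points about cycling outstanding I/Os and about queue depth can be illustrated together. The Python sketch below generates outstanding-I/O sweeps in the spirit of Iometer's exponential and linear cycling options, then applies a deliberately simplified model in which only as many I/Os as the device queue depth are in flight and the rest wait in a host-side queue. The function names and the queue depth of 32 are hypothetical choices for illustration (Iometer itself sets cycling in its GUI), and the model ignores other host and array limits.

```python
def exponential_steps(start: int, end: int, factor: int = 2) -> list[int]:
    """Outstanding-I/O values multiplied by `factor` each step: 1, 2, 4, ... up to `end`."""
    if start < 1 or factor < 2:
        raise ValueError("start must be >= 1 and factor >= 2")
    steps, qd = [], start
    while qd <= end:
        steps.append(qd)
        qd *= factor
    return steps


def linear_steps(start: int, end: int, step: int) -> list[int]:
    """Outstanding-I/O values increased by `step` each time: 8, 16, 24, ... up to `end`."""
    if start < 1 or step < 1:
        raise ValueError("start and step must be >= 1")
    return list(range(start, end + 1, step))


def split_outstanding(requested: int, device_queue_depth: int) -> tuple[int, int]:
    """Simplified model: return (in flight at the device, waiting in the host queue)."""
    in_flight = min(requested, device_queue_depth)
    return in_flight, requested - in_flight


if __name__ == "__main__":
    QUEUE_DEPTH = 32  # hypothetical; the queue depth is said to vary between 32 and 64
    for qd in exponential_steps(1, 64):
        in_flight, waiting = split_outstanding(qd, QUEUE_DEPTH)
        print(f"{qd:>3} outstanding -> {in_flight} at device, {waiting} queued on host")
```

Under this simplified model, pushing past the queue depth adds waiting I/Os rather than extra work at the device, which is why a flat 32 is a sensible starting point.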

Didier,

Thanks for your input on this, I will revisit my testing to include additional cycles and also include future testing with IOzone for NFS performance.

Vladan, for continuity purposes I am currently running the same IOmeter test on DSS v6, with the idea of then running both NFS tests using IOzone and IOmeter (with 32 outstanding IOs). I will use a flat 32 rather than ramping it up.

Unless of course Didier thinks otherwise.

I'm not surprised by the results when you go from 1 to 32 outstanding IOs, but this has a huge impact on the processor for some of the tests.

With 32 outstanding IOs, you will notice some delays between your writes and the NAS activity, especially at the end of a write operation, where an ACK is returned to the OS whilst the NAS is actually still flushing all outstanding IOs and caches to the disks…

Cheers,

Didier
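The behaviour Didier describes, with the ACK coming back before the NAS has finished writing, is typical of write-back caching. The toy Python model below is an assumption-laden illustration, not a model of DSS or Openfiler internals: writes are acknowledged as soon as they land in cache, the disks drain the cache one write at a time at a slower fixed rate, and the cache is assumed large enough to hold everything. The timings are made up.

```python
def ack_vs_flush(num_writes: int, ack_us: float, flush_us: float) -> tuple[float, float]:
    """Return (time the last write is ACKed, time the cache is fully flushed), in microseconds.

    Hypothetical write-back cache model: writes arrive in cache one after another,
    each ACKed ack_us after the previous one, while the disks destage them one at a
    time at flush_us per write. A write cannot be flushed before it is in cache.
    """
    if num_writes < 1 or ack_us <= 0 or flush_us <= 0:
        raise ValueError("need at least one write and positive timings")
    last_ack = num_writes * ack_us
    flushed = 0.0
    for i in range(num_writes):
        in_cache_at = (i + 1) * ack_us
        # The disk starts this write once it is cached and the previous flush is done.
        flushed = max(flushed, in_cache_at) + flush_us
    return float(last_ack), flushed


if __name__ == "__main__":
    # Made-up timings: 50 us to ACK a cached 4K write, 200 us to put it on disk.
    last_ack, flushed = ack_vs_flush(1000, 50.0, 200.0)
    print(f"OS sees the last ACK at {last_ack / 1000:.1f} ms")
    print(f"NAS finishes flushing at {flushed / 1000:.2f} ms")
```

Whenever flushing is slower than acknowledging, the gap between the last ACK and the end of disk activity grows with the number of writes, which matches the lag at the end of a write run.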